Stable Diffusion

Latest revision as of 17:40, 19 August 2023

Introduction

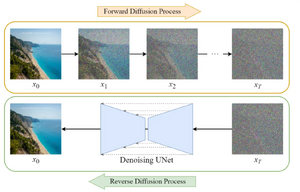

Stable Diffusion, released in 2022, is a text-to-image deep learning model based on diffusion techniques. Built on latent diffusion, it generates images from text descriptions in styles ranging from photorealistic to artistic.

Development and Collaboration

Stable Diffusion was developed through a collaboration between the CompVis Group at Ludwig Maximilian University of Munich and Runway, an AI research and development company. Stability AI provided computational support for the project, and non-profit organizations contributed data.

Features and Accessibility

A defining feature of Stable Diffusion is that users can run it on their own computers. Its predecessors were available only through cloud services; running locally lowered the barrier to entry and drew strong interest from creators and enthusiasts.

The software is designed primarily to generate detailed images from text prompts, but it is not limited to text-to-image generation. It also supports inpainting (regenerating a selected region of an image), outpainting (extending an image beyond its original borders), and image-to-image translation guided by a text prompt. This flexibility has been a major factor in its growing popularity.
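As a rough illustration of how inpainting and outpainting can be framed, the sketch below treats both as mask-based editing: a binary mask marks which pixels should be regenerated, and everything else is kept from the original. This is only a toy on nested lists of numbers, not Stable Diffusion's actual pipeline; the function names `composite` and `outpaint_canvas` are hypothetical, and the "generated" pixels are assumed to come from some model that is not shown here.

```python
def composite(original, generated, mask):
    """Keep original pixels where mask is 0; take generated pixels where mask is 1."""
    return [
        [g if m else o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]


def outpaint_canvas(image, pad, fill=0):
    """Pad an image on all sides and return (canvas, mask).

    The mask is 1 over the new border (the area to be generated)
    and 0 over the original image (the area to be preserved).
    """
    width = len(image[0])
    new_width = width + 2 * pad
    canvas = [[fill] * new_width for _ in range(pad)]
    mask = [[1] * new_width for _ in range(pad)]
    for row in image:
        canvas.append([fill] * pad + list(row) + [fill] * pad)
        mask.append([1] * pad + [0] * width + [1] * pad)
    canvas += [[fill] * new_width for _ in range(pad)]
    mask += [[1] * new_width for _ in range(pad)]
    return canvas, mask


# Inpainting: only the masked pixel (top right) is replaced.
original = [[1, 2], [3, 4]]
generated = [[9, 9], [9, 9]]  # stand-in for model output (assumption)
inpainted = composite(original, generated, [[0, 1], [0, 0]])

# Outpainting: a 1x1 image grows to 3x3, with the border marked for generation.
canvas, border_mask = outpaint_canvas([[5]], pad=1)
```

Seen this way, outpainting is inpainting on a larger canvas whose mask covers the newly added border.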

Core Mechanism

Stable Diffusion is a deep generative neural network based on latent diffusion: the diffusion process runs in a compressed latent representation of the image rather than directly on pixels, which reduces compute and memory requirements. Its code and model weights are publicly available, and it runs on mainstream consumer hardware with a GPU that has at least 8 GB of VRAM. This focus on accessibility and efficiency sets it apart from its predecessors.
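To make the efficiency argument concrete, the following minimal sketch compares the size of a pixel-space image with the size of its latent representation, and then applies the standard forward-noising formula to a toy latent. The settings are assumptions that do not come from this article: a 512x512 RGB image, a downsampling factor of 8, and 4 latent channels (values commonly cited for the v1 models), plus a plain linear noise schedule in the style of DDPM, which is illustrative and not necessarily the schedule Stable Diffusion uses. All function names are hypothetical.

```python
import math
import random


def latent_shape(height, width, factor=8, channels=4):
    """Latent tensor shape for an image, under the assumed v1-style settings."""
    if height % factor or width % factor:
        raise ValueError("height and width must be multiples of the factor")
    return (channels, height // factor, width // factor)


def cumulative_alphas(steps=1000, beta_start=1e-4, beta_end=0.02):
    """Cumulative product alpha_bar_t for a linear beta schedule (assumption)."""
    alpha_bar, values = 1.0, []
    for t in range(steps):
        beta = beta_start + (beta_end - beta_start) * t / (steps - 1)
        alpha_bar *= 1.0 - beta
        values.append(alpha_bar)
    return values


def add_noise(latent, alpha_bar_t, rng):
    """Forward diffusion: x_t = sqrt(a) * x_0 + sqrt(1 - a) * eps, eps ~ N(0, 1)."""
    signal = math.sqrt(alpha_bar_t)
    noise = math.sqrt(1.0 - alpha_bar_t)
    return [signal * x + noise * rng.gauss(0.0, 1.0) for x in latent]


pixel_shape = (3, 512, 512)
z_shape = latent_shape(512, 512)
# How many times fewer values the diffusion process has to handle.
ratio = math.prod(pixel_shape) / math.prod(z_shape)

alphas = cumulative_alphas()
rng = random.Random(0)
toy_latent = [1.0] * 16  # flattened stand-in for a real latent
noisy = add_noise(toy_latent, alphas[-1], rng)  # almost pure noise at the last step
```

Under these assumptions the latent holds far fewer values than the image, which is one reason a latent diffusion model can fit on a consumer GPU.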

Impact and Future Developments

Since its release, Stable Diffusion has prompted a wave of creativity and experimentation within the AI community. Because it is openly available, efficient, and inexpensive to run, users have been able to explore AI-generated imagery on their own hardware. The public availability of the model's code and components has also led to a variety of free tools and resources built around it.

As AI and deep learning continue to evolve, Stable Diffusion is likely to remain influential in creative AI applications and in making advanced AI technologies accessible to a wider audience.

For further discussions and updates, please visit the discussion page.